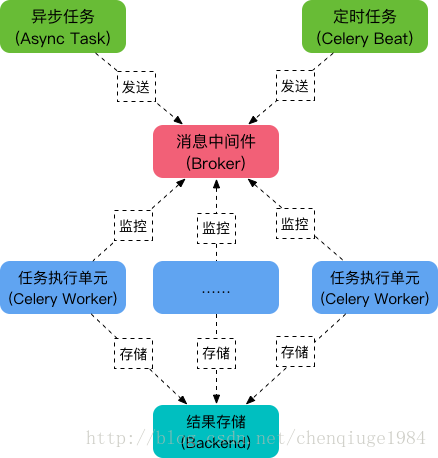

It is possible to write an app and start its worker process from one module, beginning with `from datetime import timedelta` and settings such as `CELERY_RESULT_BACKEND="redis://localhost:6379"` and `CELERY_ACCEPT_CONTENT=…`. But how can I start the beat process from the same module to start running periodic tasks, so that the celerybeat daemon does not have to run as a separate executable? This is important because I'd like to use PyInstaller, so no dedicated Python interpreter will be available on client machines.

Well, guys, this task is not as hard as I supposed. A little research led me to the answer. One can create a simple beat process like this: import the class that runs the beat process (`from … import beat`), import your app (`from celerytasks import app`), and call `beat(app=app)` under an `if __name__ == '__main__':` guard.

1. Create a sample project, like the one in First Steps with Django.
2. All the tasks will be correctly registered.
3. Inside the project, create a `src` folder and move all files into it.

I have verified that the issue exists against the master branch of Celery.

Note that in the cloud environment, the Celery-related containers are launched automatically. They, and the AMQP message queue, are not directly accessible. Locally, the four new containers will be set up as new services using the `docker-compose.yml` file.

A Celery utility daemon called beat implements periodic tasks by submitting your tasks to run as configured in your task schedule. For example, if you configure a task to run every morning at 5:00 a.m., then every morning at 5:00 a.m. the beat daemon will submit the task to a queue to be run by Celery's workers.
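To make the division of labour concrete, here is a toy, standard-library-only sketch of what a beat-style scheduler does: it checks a schedule and puts due task names on a queue for workers to pick up. This is not Celery's implementation; `ToyBeat`, its `tick` method, and the `"report"` task name are hypothetical names chosen for illustration.

```python
from datetime import datetime, timedelta
from queue import Queue


class ToyBeat:
    """Illustrative stand-in for a beat-style scheduler (not Celery's code)."""

    def __init__(self, schedule, queue, start):
        # schedule maps a task name to the interval between runs
        self.schedule = schedule
        self.queue = queue
        # Each task first becomes due one interval after `start`
        self.next_run = {name: start + every for name, every in schedule.items()}

    def tick(self, now):
        """Enqueue every task that is due at `now` and return their names."""
        submitted = []
        for name, every in self.schedule.items():
            if now >= self.next_run[name]:
                self.queue.put(name)  # a worker would consume this entry
                self.next_run[name] = now + every
                submitted.append(name)
        return submitted


# Example: a daily task anchored at 5:00 a.m.
tasks = Queue()
start = datetime(2024, 1, 1, 5, 0)
toy = ToyBeat({"report": timedelta(days=1)}, tasks, start)
```

The scheduler itself never runs a task. It only submits work, which is why at least one worker still has to be running for anything to execute.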

I've successfully learned some basics of Celery, but I've found no simple way to create a single-file executable (with no need to run celerybeat as a separate process to run periodic tasks).
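One possible way to approach the single-executable goal is to start beat from your own entry point instead of the `celery beat` command. The sketch below assumes a Celery app object that already has its periodic schedule configured, and relies on the embeddable scheduler that Celery exposes on the app as `app.Beat`. The helper name `run_beat` is hypothetical, and the accepted keyword arguments may differ between Celery versions, so treat this as a starting point rather than a definitive recipe.

```python
def run_beat(app, loglevel="INFO"):
    """Run the beat scheduler in the current process.

    `app` is assumed to be a configured Celery application. This call
    blocks until the scheduler is stopped, and it only submits tasks:
    a worker must be running elsewhere to execute them.
    """
    # app.Beat is the beat service class bound to this app
    beat = app.Beat(loglevel=loglevel)
    beat.run()
```

In the module that gets frozen, this would typically be called under an `if __name__ == '__main__':` guard, for example `from celerytasks import app` followed by `run_beat(app)`.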